10 signs your organization is ready for agentic AI development

FEB. 2, 2026


You’re ready for agentic AI when your delivery system is already disciplined.

Agentic AI software development puts software changes, tests, and operational actions behind tools that a model can use. That can cut cycle time, but it also raises the cost of weak controls because the agent will act at machine speed. You get value when the agent has clear boundaries, clean inputs, and a safe place to operate. You get noise and risk when those basics are missing.

Agentic AI readiness is not a feeling or a vendor demo result. It’s a set of observable conditions in your teams, platforms, and governance. If you can verify those conditions, you can start with small, low-risk tasks and expand from there. If you cannot, the right move is to fix the delivery system first.

Key takeaways

1. Agentic AI readiness is proven through delivery discipline, tight access, and measurable production risk, not interest or tooling demos.

2. Clear success metrics and strict guardrails keep agents useful and safe, with least-privilege permissions and auditable actions as the baseline.

3. Start with a narrow workflow and expand only after stable results, fixing CI/CD, testing, and governance gaps before choosing build or buy.

Confirm your agentic AI readiness before funding development work

Agentic AI is a fit when you can constrain what an agent can access, what it can change, and how you can reverse it. Readiness means your work is already broken into small units, your software supply chain is controlled, and production risk is measurable. If your team struggles to ship safely without agents, adding them will multiply the same failure modes.

Start with a simple test you can run with leadership in the room. Ask what the agent would touch for a single task: repos, tickets, secrets, build systems, and runtime systems. If any of those are “everyone has access” or “we’ll sort it out later,” hold funding until basic controls and ownership are in place.

Set success metrics and guardrails for software development agents

Good outcomes come from clear targets and strict guardrails, not from giving agents broad power. Your metrics should reward safer delivery, not just more code written. Your guardrails should keep humans accountable for approvals, access, and risk acceptance. This makes evaluating agentic AI adoption much simpler because you can see impact in delivery data.

- Lead time for small changes stays predictable

- Change failure rate does not rise

- Escaped defects trend down release over release

- Mean time to restore service remains low

- Policy violations stay near zero
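
Two of the metrics above, change failure rate and mean time to restore, can be computed directly from deployment records. The following is a minimal sketch, assuming a hypothetical `Deployment` record with `deployed_at`, `failed`, and `restored_at` fields; your delivery tooling will likely expose this data under different names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    """One production change; field names are hypothetical."""
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set once a failed change is recovered


def change_failure_rate(deploys: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure in production."""
    if not deploys:
        return 0.0
    return sum(d.failed for d in deploys) / len(deploys)


def mean_time_to_restore(deploys: list[Deployment]) -> Optional[timedelta]:
    """Average time from a failed deployment to its recovery, if any failed."""
    durations = [
        d.restored_at - d.deployed_at
        for d in deploys
        if d.failed and d.restored_at is not None
    ]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```

Comparing these values for a baseline period and for a period with agent-authored changes is one way to check that the change failure rate has not risen.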

Guardrails should be concrete: least-privilege service accounts, tool allowlists, human review on writes to main, and audit logs for every action. Separate “agent suggests” from “agent applies” until you trust the workflow. Tie agent permissions to the same controls you already use for people, then tighten them further.

Agentic AI readiness checklist with 10 signs you qualify

Use this agentic AI readiness checklist as a go or no-go gate. Each sign is something you can validate in your delivery system, not a claim about model quality. Passing more signs means you can start with higher-impact AI agents for software development. Failing several signs means your first wins will come from strengthening basics.


1. Teams ship in small batches with clear ownership

Small batches keep agent work scoped, reviewable, and easier to roll back. Clear ownership means someone is accountable for what the agent changes and how it gets tested. Look for thin pull requests, stable release cadence, and named owners for services and repos. If ownership is unclear, agents will amplify confusion and slow approvals.

2. Source code and artifacts have strong access controls

Agents need access, but they should not get the keys to everything. Strong controls include code owners, protected branches, signed builds, and audit logs for writes. Treat agent identities as service accounts with short-lived credentials and tight scopes. If repo access is wide open, agent work will create compliance and supply chain risk.
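
A minimal sketch of what "short-lived credentials and tight scopes" can look like in practice follows. The `AgentCredential` type, the scope strings, and the 15-minute default lifetime are assumptions for illustration; in production this would be handled by your identity provider or secrets manager rather than application code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical credential for an agent service account."""
    agent_id: str
    scopes: frozenset
    expires_at: datetime


def issue_credential(agent_id, scopes, ttl_minutes=15, now=None):
    """Issue a credential limited to the given scopes and lifetime."""
    now = now or datetime.now(timezone.utc)
    return AgentCredential(agent_id, frozenset(scopes),
                           now + timedelta(minutes=ttl_minutes))


def permits(cred, scope, now=None):
    """True only if the scope was granted and the credential has not expired."""
    now = now or datetime.now(timezone.utc)
    return scope in cred.scopes and now < cred.expires_at
```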

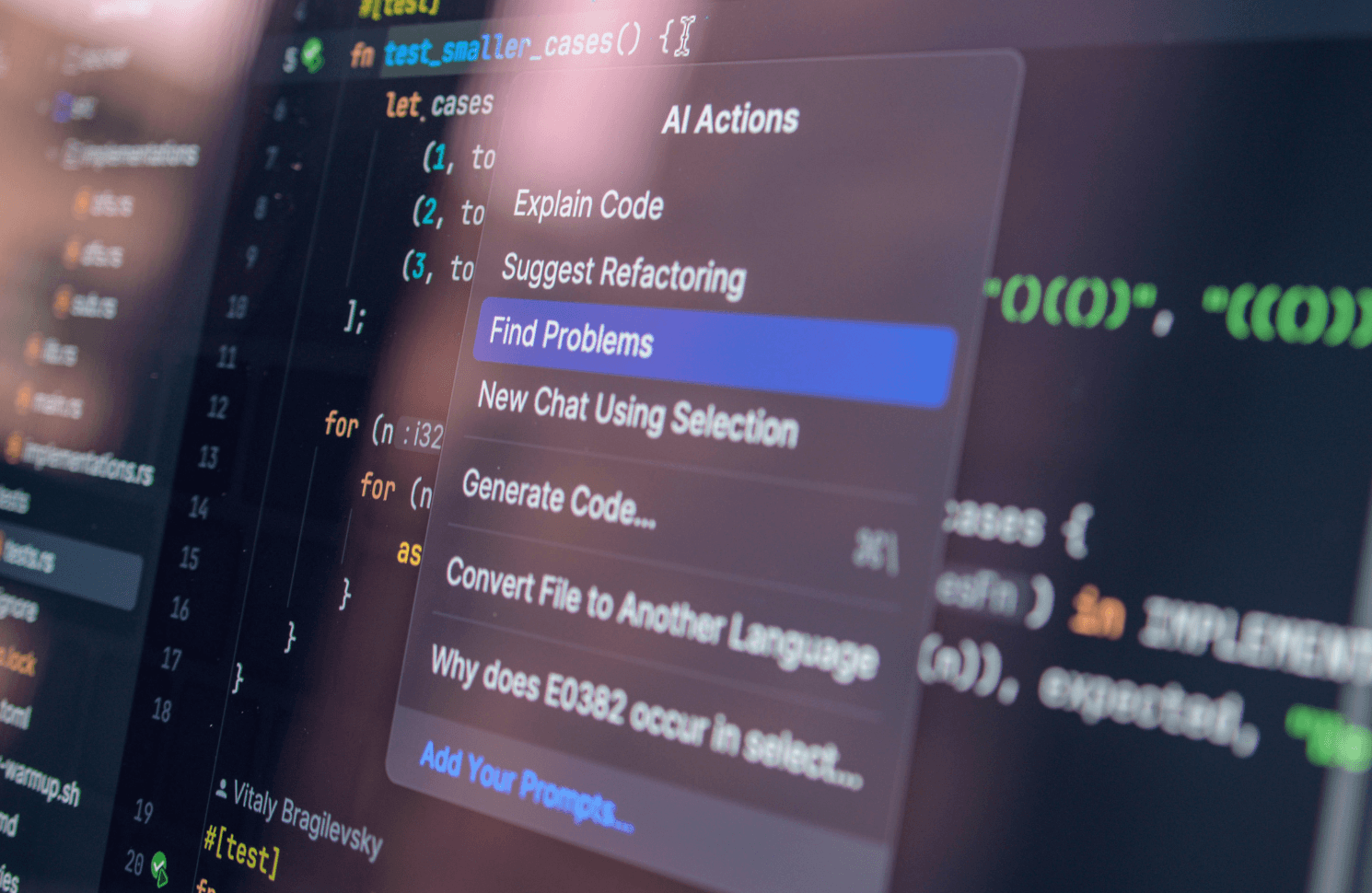

3. CI/CD and testing are stable and well-instrumented

Stable CI/CD gives the agent fast feedback and blocks unsafe changes before merge. Flaky tests and slow pipelines create false signals that waste tokens and engineer time. A concrete example is an agent updating a dependency, running unit tests, then opening a pull request only after the pipeline stays green. If you can’t trust pipeline results, you can’t trust automated changes.
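
One way to make "the pipeline stays green" testable is to require several consecutive passing runs and to track how often reruns of the same commit disagree. The sketch below assumes run results are available as simple `"passed"` or `"failed"` strings; the function names and the default of three runs are illustrative choices, not a standard.

```python
def pipeline_stays_green(run_results, required_green=3):
    """True when the most recent `required_green` runs all passed."""
    if len(run_results) < required_green:
        return False
    return all(r == "passed" for r in run_results[-required_green:])


def flaky_rate(results_by_commit):
    """Fraction of commits whose reruns produced both a pass and a failure."""
    if not results_by_commit:
        return 0.0
    flaky = sum(1 for runs in results_by_commit.values() if len(set(runs)) > 1)
    return flaky / len(results_by_commit)
```

A high flaky rate suggests the pipeline signal itself needs repair before an agent's green build can be taken as evidence of a safe change.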

4. Backlogs are groomed with consistent acceptance criteria

Agents perform best when tasks have crisp definitions and measurable outcomes. Consistent acceptance criteria turn vague work into testable work that a tool can verify. Check that tickets include inputs, expected behavior, and constraints such as security or latency targets. If stories are fuzzy, the agent will fill gaps with guesses and rework will spike.
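
The ticket check described above can be automated before work is handed to an agent. This is a minimal sketch, assuming tickets are available as dictionaries with hypothetical `inputs`, `expected_behavior`, and `constraints` fields; real ticketing systems will use their own field names.

```python
# Hypothetical field names for the three items a ticket should carry.
REQUIRED_FIELDS = ("inputs", "expected_behavior", "constraints")


def _is_blank(value):
    """Treat None, empty or whitespace-only strings, and empty collections as blank."""
    if value is None:
        return True
    if isinstance(value, str):
        return not value.strip()
    if hasattr(value, "__len__"):
        return len(value) == 0
    return False


def missing_acceptance_fields(ticket):
    """Return the required fields that are absent or blank on a ticket."""
    return [f for f in REQUIRED_FIELDS if _is_blank(ticket.get(f))]
```

A ticket that returns an empty list is a candidate for agent work; anything else goes back for grooming.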

5. Architecture has clear boundaries and updated documentation

Clear boundaries help an agent stay inside safe zones and avoid cross-service breakage. Updated documentation limits context gaps, especially around APIs, data contracts, and service ownership. Look for stable interfaces, modular code, and docs that match what runs in production. If architecture is tangled, agents will produce changes that compile but fail in practice.

6. Observability covers logs, metrics, traces, and error budgets

Observability is how you verify that an agent’s change helped and did not hurt. Logs, metrics, and traces should point to the cause of failures without a long war room. Error budgets give leaders a shared way to judge when automation should slow down. If you lack these signals, you’ll argue about impact instead of measuring it.
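
To show how an error budget can drive the "slow down" decision, here is a minimal sketch based on a request-based availability target. The thresholds in `automation_mode` and the mode names are assumptions for illustration; each team would set its own policy.

```python
def error_budget_remaining(slo_target, total_requests, failed_requests):
    """Fraction of the error budget still unspent (negative when overspent).

    slo_target is the availability objective, e.g. 0.999 for 99.9%.
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be between 0 and 1, exclusive")
    if total_requests == 0:
        return 1.0  # no traffic yet, so nothing has been spent
    allowed_failures = (1 - slo_target) * total_requests
    return 1 - failed_requests / allowed_failures


def automation_mode(budget_remaining):
    """Map remaining budget to an agent operating mode (thresholds are illustrative)."""
    if budget_remaining > 0.5:
        return "normal"
    if budget_remaining > 0:
        return "suggest_only"
    return "paused"
```

For example, a 99.9% target over 1,000,000 requests allows roughly 1,000 failures, so 250 failures leaves about three quarters of the budget.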

7. Data and secrets management are centralized and audited

Central secrets management prevents tokens from leaking into repos, tickets, or prompts. Auditing shows who accessed what and when, including non-human accounts. Data access should follow classification rules so agents don’t pull sensitive records into their working context. If secrets live in many places, agent rollouts will stall in risk reviews.
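
As a last line of defense, text can be scanned for secret-like strings before it enters a prompt or ticket. The sketch below uses a few illustrative regular expressions; the pattern set is an assumption and far from complete, so it supplements central secrets management rather than replacing it.

```python
import re

# Illustrative patterns only; a real scanner needs a much broader, maintained set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "inline_credential": re.compile(
        r"\b(?:password|token|api_key)\s*[:=]\s*\S+", re.IGNORECASE
    ),
}


def redact(text):
    """Replace secret-like matches with a placeholder and report which patterns hit."""
    findings = []
    for name, pattern in SECRET_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            findings.append(name)
    return text, findings
```

Any non-empty findings list is worth logging, since it signals that a secret was sitting somewhere an agent could read it.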

8. Security reviews cover prompt injection and tool misuse

Agentic workflows add new attack paths through prompts, tools, and retrieved context. Security reviews should cover prompt injection, unsafe tool calls, and data exfiltration paths. Require threat modeling for each tool the agent can call, not just the model itself. If security is an afterthought, your first incident will become your operating model.

9. Sandboxes exist for safe agent execution and rollback

Sandboxes let agents run commands, generate changes, and test outcomes without touching production systems. Rollback needs to be routine, not a heroic recovery step. Look for ephemeral runners, isolated test setups, and clear release reversal steps. If your rollback path is weak, agents will raise the business cost of every mistake.

10. Leaders fund change management and new operating roles

Agents change how work gets assigned, reviewed, and approved, so leadership must fund the people side. New roles often include agent workflow owners, policy owners, and platform owners for tool access. Training matters because engineers need to verify agent output and manage exceptions. If leadership wants speed without investing in process updates, adoption will stall.

| Readiness sign | What it tells you |
|---|---|
| Teams ship in small batches with clear ownership | Agent work will stay scoped and review will stay fast. |
| Source code and artifacts have strong access controls | Agent permissions can be limited and fully audited. |
| CI/CD and testing are stable and well-instrumented | Automated checks will block unsafe changes reliably. |
| Backlogs are groomed with consistent acceptance criteria | Agent tasks will be clear enough to verify objectively. |
| Architecture has clear boundaries and updated documentation | Agents will have enough context to avoid cross-cutting breakage. |
| Observability covers logs, metrics, traces, and error budgets | You can measure impact and spot regressions quickly. |
| Data and secrets management are centralized and audited | Sensitive access will stay controlled during agent workflows. |
| Security reviews cover prompt injection and tool misuse | New attack paths will be addressed before rollout. |
| Sandboxes exist for safe agent execution and rollback | Agent actions can be tested safely and reversed cleanly. |
| Leaders fund change management and new operating roles | People and process changes will keep pace with tooling. |
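
If it helps to record the gate in a repeatable form, the ten signs can be tracked as a simple pass or fail checklist. This is a sketch only: the short sign identifiers and the `max_failures=2` threshold are assumptions, since the checklist does not prescribe how many failures count as "several".

```python
# Short, hypothetical identifiers for the ten readiness signs.
SIGNS = [
    "small_batches_clear_ownership",
    "strong_access_controls",
    "stable_cicd_and_testing",
    "consistent_acceptance_criteria",
    "clear_architecture_boundaries",
    "observability_and_error_budgets",
    "centralized_secrets_and_data",
    "security_reviews_for_agents",
    "sandboxes_and_rollback",
    "funded_change_management",
]


def readiness_decision(results, max_failures=2):
    """Return ("go" or "no-go", failing signs); unassessed signs count as failing."""
    failing = [sign for sign in SIGNS if not results.get(sign, False)]
    decision = "go" if len(failing) <= max_failures else "no-go"
    return decision, failing
```

The list of failing signs doubles as the backlog of basics to strengthen before the next review.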

Fix common blockers before adopting AI agents for development

The biggest blockers are rarely model limits. Most teams get stuck on flaky tests, unclear ownership, weak access controls, and missing audit trails. Start with one constraint that reduces risk across every agent use case, such as branch protections or secrets centralization. Those changes also improve delivery even if you pause agent work.

Teams at Lumenalta often see the same pattern when delivery is under pressure: leaders want faster throughput, but the system can’t prove what changed and why. Focus on reducing variance first, then add agents to the stable workflow you already trust. You’ll spend less time in approvals and more time shipping verified work.


Choose build or buy and set your rollout plan

The main difference between building and buying is where you want ongoing control to sit. Buying usually gives faster setup and a narrower set of supported tools, which can reduce early risk. Building gives deeper integration with your repos, ticketing, and internal platforms, but it also adds maintenance and security ownership. The right call depends on how unique your workflow is and how much policy you must enforce.

Pick one workflow with clear value and low blast radius, then expand only after the metrics stay healthy. Start with read access, then limited write access with human approval on merges, then tighter automation once rollback and audit are routine. Keep the work boring on purpose, because boring work is easier to verify at scale. When you want help designing that rollout without handing over control, Lumenalta can work alongside your teams to set permissions, guardrails, and measurements that match how you ship.
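
The staged rollout described above can be expressed as a small state machine so that promotions are explicit and reviewable. The stage names and the `next_stage` function are hypothetical, and the rule that an agent simply holds its stage when metrics are unhealthy is one reasonable reading, not the only one.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Hypothetical names for the three rollout stages."""
    READ_ONLY = 1
    WRITE_WITH_APPROVAL = 2
    TIGHTER_AUTOMATION = 3


def next_stage(current, metrics_healthy, rollback_and_audit_routine):
    """Promote one stage at a time, and only when the evidence supports it."""
    if not metrics_healthy or current is Stage.TIGHTER_AUTOMATION:
        return current  # hold the current stage
    if current is Stage.WRITE_WITH_APPROVAL and not rollback_and_audit_routine:
        return current  # automation waits until rollback and audit are routine
    return Stage(current + 1)
```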

