How AI tools are reshaping developer experience

MAR. 24, 2026


AI tools shift developer experience from typing code to steering outcomes.

That shift matters because the bottleneck is moving from keystrokes to judgment: picking the right intent, supplying the right context, and catching failure early. A field experiment with 758 management consultants found that, on tasks within the model's capabilities, generative AI support cut task time by about 25% and raised quality by about 40%. Teams that treat this as a workflow redesign will see compounding gains. Teams that treat it as “faster autocomplete” will buy speed and rent risk.

Tech leaders and executives should evaluate AI developer experience the same way they evaluate any delivery investment: throughput, defect rates, cycle time, and audit posture. The practical question is not which model is best. The practical question is how your engineering system will absorb AI without losing reliability, security, or ownership of the code you ship.

Key takeaways

1. AI developer experience improves when you standardize steering inputs, verification, and review so speed does not raise rework or defect risk.

2. Best results come from AI support across planning, coding, review, and release, with shared context and audit trails that match how teams already ship.

3. Start where outcomes are measurable, invest first in tests and refactoring, then scale tool use with clear guardrails for security, compliance, and IP.

AI developer experience moves work from writing to steering

AI developer experience improves when developers spend less effort producing text and more effort steering intent and constraints. Your team will write fewer lines manually, but will spend more time defining acceptance criteria, reviewing generated diffs, and verifying behavior. The highest value shifts to clarity, not velocity. Steering also forces better engineering hygiene because weak specs produce weak output.

This creates a new “control surface” for delivery: prompts, repo context, test signals, and review gates. When that surface is consistent, developers feel faster and calmer because the next step is always clear. When that surface is messy, developers feel whiplash because they must re-check everything the tool produced. The win comes from standardizing how work enters the system and how it exits.

Leaders should also expect different skill gaps than past tooling waves. Prompt craft is useful, but system thinking matters more: what inputs lead to reliable outputs, and what checks stop bad code before it ships. Hiring, onboarding, and performance goals will need to reward validation and design, not raw output. That is the simplest way to keep speed from degrading quality.

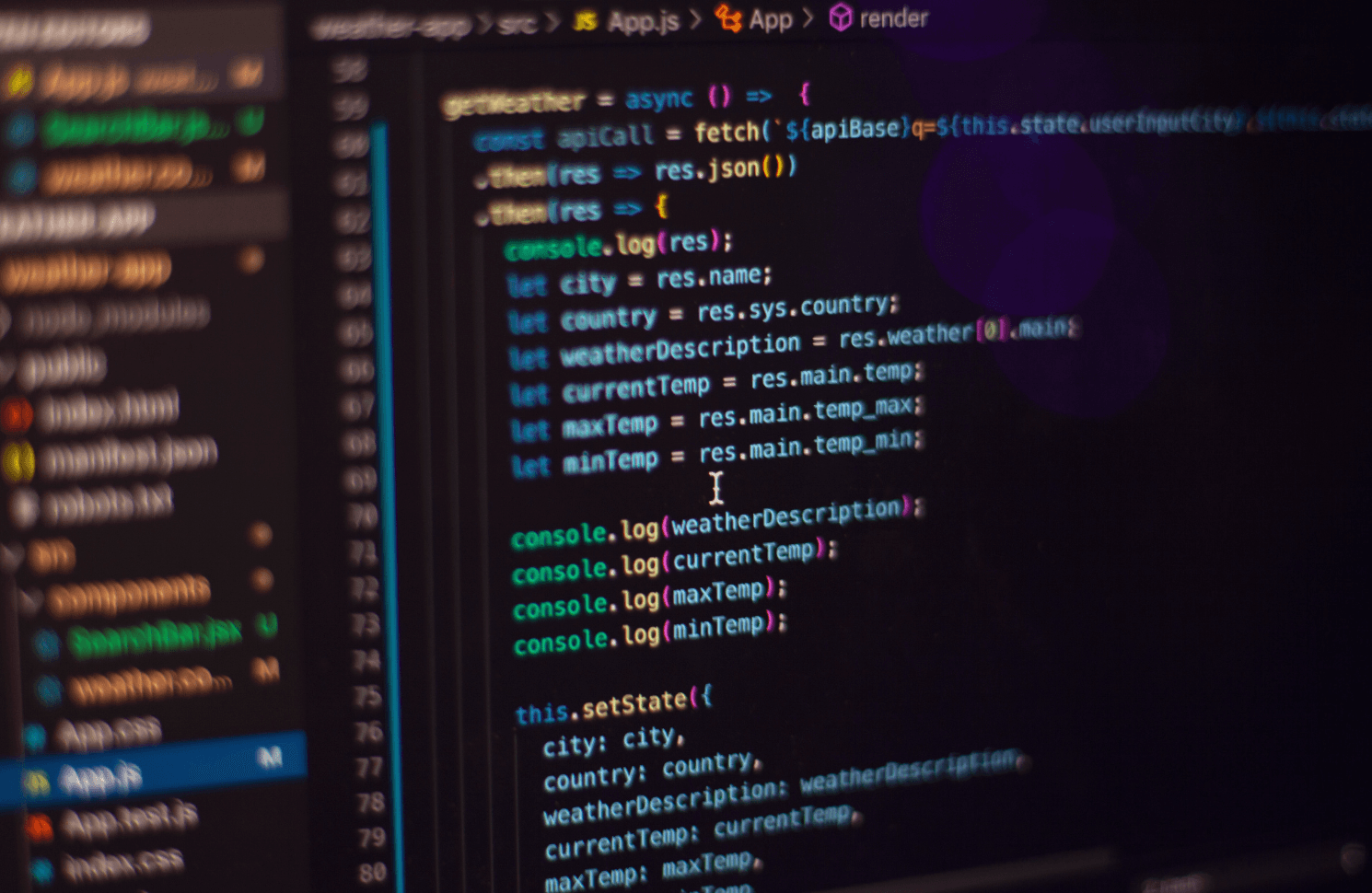

Vibe coding tools turn intent into code and tests

Vibe coding is a workflow where you express intent in plain language and iterate until the code feels right, instead of specifying every detail up front. Vibe coding tools turn that intent into scaffolds, implementation, and tests, then revise fast as you adjust goals. This feels productive because feedback is immediate. It also raises the bar for how you define “done.”

The practical definition leaders should use is narrow: vibe coding works best when the problem has clear boundaries and quick verification. The tool acts like a junior collaborator that can draft, refactor, and propose options, but it cannot own correctness. If your team cannot state inputs, outputs, and nonfunctional needs in a few sentences, the tool will produce confident-looking code with hidden gaps.

Strong teams treat vibe coding as a front-end to disciplined engineering. They keep specs lightweight but explicit, they require tests as part of the same work unit, and they insist on readable diffs that a reviewer can reason about. The productivity comes from compressing the “blank page” step, not from skipping review. That distinction is what keeps vibe coding useful after the novelty wears off.

AI-powered workflows across planning, coding, review, and release

AI-powered developer workflows work when AI is placed across the full delivery loop, not only inside the editor. Planning gets faster when intent is captured as crisp requirements and risks. Coding gets faster when scaffolds and refactors are cheap. Review and release get safer when checks are automated and explanations are easy to audit.

A concrete workflow looks like this: a team needs to add a cancellation endpoint to a subscription service with strict audit rules. AI drafts a short requirements note and proposes edge cases, then generates the endpoint skeleton and a first pass at unit tests. During review, the tool summarizes the diff, flags likely null handling issues, and suggests tighter error messages. After release, it helps write a runbook update and a monitoring query so on-call can verify the new path behaves correctly.
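
To make that example tangible, here is a minimal sketch of what the generated skeleton and first-pass test might look like. Everything in it is an assumption for illustration: the `Subscription` and `AuditLog` types, the in-memory `store`, the status codes, and the rule that every attempt (including failed ones) is audited are hypothetical, not a description of any particular service.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Subscription:
    subscription_id: str
    status: str = "active"  # hypothetical states: "active" or "cancelled"


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, subscription_id: str, outcome: str) -> None:
        # Assumed audit rule: keep who did what, to which record, and when.
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "subscription_id": subscription_id,
            "outcome": outcome,
        })


def cancel_subscription(store: dict, audit: AuditLog, subscription_id: str, actor: str) -> dict:
    """Cancel a subscription and audit every attempt, including failures."""
    sub = store.get(subscription_id)
    if sub is None:
        # Explicit handling of the missing case, the kind of gap a review should flag.
        audit.record(actor, "cancel", subscription_id, "not_found")
        return {"status": 404, "error": f"subscription {subscription_id} not found"}
    if sub.status == "cancelled":
        audit.record(actor, "cancel", subscription_id, "already_cancelled")
        return {"status": 409, "error": "subscription is already cancelled"}
    sub.status = "cancelled"
    audit.record(actor, "cancel", subscription_id, "cancelled")
    return {"status": 200, "subscription_id": subscription_id, "state": "cancelled"}


def test_cancel_is_audited() -> None:
    # First-pass unit test: happy path, repeat call, and unknown id.
    store = {"sub-1": Subscription("sub-1")}
    audit = AuditLog()
    assert cancel_subscription(store, audit, "sub-1", "alice")["status"] == 200
    assert cancel_subscription(store, audit, "sub-1", "alice")["status"] == 409
    assert cancel_subscription(store, audit, "missing", "alice")["status"] == 404
    assert len(audit.entries) == 3
```

The point of the sketch is the shape of the work unit: the handler, its edge cases, and its test arrive together, so a reviewer can check the audit behavior in one readable diff.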

That workflow only works when you treat AI as part of the system of record, not a side channel. Requirements notes, test intent, and release criteria must live where the team already works, or the process splinters. Auditability matters too, because leadership will need to explain how code was produced and verified. Teams that connect AI to planning, review, and operations will see more stable gains than teams that focus only on generation.

Where to focus first for measurable team productivity gains

The best starting point is the part of the workflow where delays and rework are most expensive. Software defects are not just an engineering problem; they are a cost structure problem. A 2002 NIST study estimated that software errors cost the US economy $59.5 billion each year. AI adoption that increases rework will burn savings and trust.

Use a simple sequence so the team learns safely and you can measure impact without guesswork.

- Standardize “definition of done” language so prompts and reviews match

- Start with test generation and refactoring before net new features

- Instrument cycle time and defect escape rate for the same services

- Align review gates so generated diffs get the same scrutiny

- Create a shared prompt and context library tied to coding standards

Each step creates a stable feedback loop. Tests and refactors are safer because the blast radius is smaller and verification is clearer. Measurement keeps the discussion grounded in outcomes, not opinions about tool quality. Once those basics are steady, expanding to planning support and release automation becomes straightforward.
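
The two metrics named in the list can be sketched in a few lines. This is one common way to define them, not the only one: cycle time is assumed here to run from when a change is opened to when it is deployed, and defect escape rate is assumed to be the share of all known defects that were found in production. The function names and the sample numbers are made up for illustration.

```python
from datetime import datetime
from statistics import median


def cycle_time_hours(opened_at: datetime, deployed_at: datetime) -> float:
    """Hours from a change being opened to it running in production (assumed definition)."""
    return (deployed_at - opened_at).total_seconds() / 3600


def median_cycle_time_hours(changes: list) -> float:
    """Median is used instead of mean so one stuck change does not dominate the number."""
    return median(cycle_time_hours(opened, deployed) for opened, deployed in changes)


def defect_escape_rate(found_before_release: int, found_in_production: int) -> float:
    """Share of all known defects that escaped to production (assumed definition)."""
    total = found_before_release + found_in_production
    if total == 0:
        return 0.0  # no defects recorded, so nothing escaped
    return found_in_production / total


# Illustrative sample data for one service over one period.
changes = [
    (datetime(2026, 1, 5, 9), datetime(2026, 1, 6, 9)),   # 24 hours
    (datetime(2026, 1, 7, 9), datetime(2026, 1, 7, 21)),  # 12 hours
    (datetime(2026, 1, 8, 9), datetime(2026, 1, 10, 9)),  # 48 hours
]
print(median_cycle_time_hours(changes))   # 24.0
print(defect_escape_rate(18, 2))          # 0.1
```

Computing both numbers for the same services before and after introducing AI assistance is what makes the comparison meaningful; a faster cycle time paired with a rising escape rate is the "speed with rework" pattern the sequence above is meant to avoid.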

Choosing tools using accuracy, context, cost, and integration fit

Tool selection should follow the work, not the demo. Accuracy matters, but accuracy without context will still produce waste. Cost matters, but cost without adoption will still produce waste. Integration fit matters most because it decides if the workflow is coherent or fragmented.

Run evaluations on your own repos with tasks that resemble production work, then score results against the same rubric across teams. Treat privacy, data residency, and logging as first-order requirements. If the tool cannot respect your access model, you will either block it or accept risk. If the tool cannot plug into your existing review flow, developers will route around it.

| What you evaluate | What “good” looks like in practice |
|---|---|
| Correctness under constraints | The tool respects acceptance criteria and does not invent unsupported behavior. |
| Context handling for your codebase | The tool uses repo conventions and shared libraries without constant re-prompting. |
| Explainability for reviewers | The tool can summarize diffs and rationale so review time drops without risk. |
| Operational cost control | Usage limits, reporting, and budgeting are clear enough for finance oversight. |
| Integration with identity and logging | Access is enforced per repo and activity is auditable for compliance needs. |
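
One way to apply the same rubric across teams is a simple weighted score over the five criteria in the table. The sketch below is an assumption-heavy illustration: the weights, the 1 to 5 scale, and the criterion keys are hypothetical, and integration is weighted highest only to mirror the argument that integration fit matters most. Teams would set their own weights.

```python
# Hypothetical weights; they sum to 1.0 but the function normalizes anyway.
CRITERIA_WEIGHTS = {
    "integration_identity_logging": 0.30,
    "correctness_under_constraints": 0.25,
    "context_handling": 0.20,
    "explainability": 0.15,
    "operational_cost_control": 0.10,
}


def weighted_score(scores: dict, weights: dict = CRITERIA_WEIGHTS) -> float:
    """Combine per-criterion scores (assumed scale: 1 to 5) into one comparable number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in weights:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"score for {name} must be between 1 and 5")
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Illustrative scores for a made-up candidate tool.
tool_a = {
    "integration_identity_logging": 4,
    "correctness_under_constraints": 5,
    "context_handling": 3,
    "explainability": 4,
    "operational_cost_control": 2,
}
print(round(weighted_score(tool_a), 2))  # 3.85
```

A single number never replaces the underlying notes, but scoring every candidate on the same tasks with the same weights keeps the comparison about the work rather than the demo.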

Guardrails for security, compliance, and IP in assisted coding

Guardrails make AI assistance safe enough to scale across teams. Security needs to cover inputs and outputs, not just the final code. Compliance needs traceability for who requested what and what was accepted. IP protection needs clear rules for what data can be sent and what code can be reused.

Start with a policy that developers can follow without slowing down. Access control should match your repo permissions, and logs should capture prompts, references, and generated diffs for later review. Secure coding checks must run the same way for assisted code as for manual code, with consistent thresholds and exceptions. If an exception process exists, it should be visible and time-bound.
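
The policy above can be expressed as three small checks. This is a sketch under stated assumptions, not a reference implementation: the function names, the shape of `repo_permissions`, and the record fields are hypothetical, and a real setup would read permissions from the identity provider and write records to an append-only store.

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional


def can_use_assistant(user: str, repo: str, repo_permissions: dict) -> bool:
    """Allow assistance only where the user already has access to the repo."""
    return user in repo_permissions.get(repo, set())


def build_audit_record(user: str, repo: str, prompt: str, references: list,
                       generated_diff: str, accepted: bool) -> dict:
    """Capture the prompt, the references used, and the generated diff for later review."""
    return {
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "repo": repo,
        "prompt": prompt,
        "references": sorted(references),
        "generated_diff": generated_diff,
        # A hash lets reviewers confirm the stored diff was not altered afterwards.
        "diff_sha256": hashlib.sha256(generated_diff.encode("utf-8")).hexdigest(),
        "accepted": accepted,
    }


def exception_is_active(expires_at: datetime, now: Optional[datetime] = None) -> bool:
    """Exceptions are time-bound: they stop applying once the expiry passes."""
    current = now if now is not None else datetime.now(timezone.utc)
    return current < expires_at


# Illustrative usage with made-up names.
permissions = {"billing-service": {"alice"}}
if can_use_assistant("alice", "billing-service", permissions):
    record = build_audit_record(
        user="alice",
        repo="billing-service",
        prompt="add a cancellation endpoint with audit logging",
        references=["docs/audit-rules.md"],
        generated_diff="+ def cancel(): ...",
        accepted=True,
    )
```

Keeping the access check tied to existing repo permissions means there is no second permission model to maintain, which is what lets developers follow the policy without slowing down.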

Execution matters because guardrails are operational work, not paperwork. Lumenalta teams often implement guardrails as part of the delivery pipeline so developers see the same checks every time and security teams get the audit trail they need. That approach reduces debates and keeps focus on shipping. A tool that feels “free” but produces governance debt will cost more than it saves.

Developer experience trends shaping platform strategy over the next 18 months

The main trend is consolidation around workflows, not a growing pile of assistants. Teams will standardize a small set of AI touchpoints that map to planning, coding, review, and release. Developer experience will be judged by reliability and trust, not novelty. Platform owners will win when they treat AI as a controlled capability with clear contracts.

Vibe coding will stay useful, but only inside boundaries that protect quality. Steering will become a core engineering skill, and your best developers will spend more time writing constraints, tests, and review notes than raw implementation. Leaders should treat that as progress because it moves effort toward correctness and maintainability. The fastest teams will be the ones that can verify work quickly, not the ones that can generate the most code.

Judgment is the long-term differentiator. A disciplined operating model keeps the benefits of AI developer experience while limiting new failure modes. When Lumenalta supports leadership teams on this shift, the work centers on measurable delivery outcomes, clear guardrails, and a workflow that developers will actually use. That combination produces confidence you can defend in front of security, finance, and the board.

